GLM-Image Resources

Latest posts and updates about GLM-Image

How to generate images with GLM-Image through the official Z.ai API, with curl and Python examples, sizing rules, quality modes, and best practices.

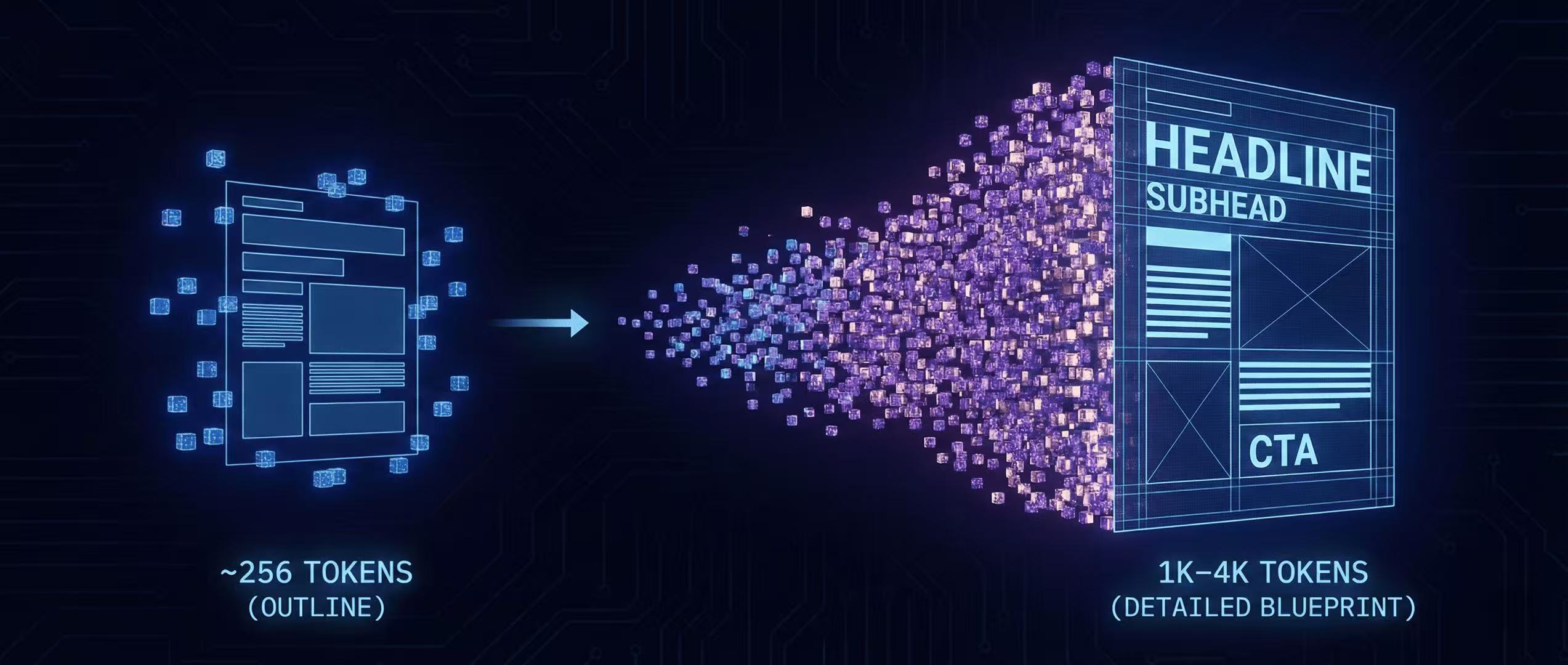

GLM-Image generates image tokens autoregressively—starting from ~256 tokens and expanding to 1K–4K. Here's what that means for layouts, typography, and control.

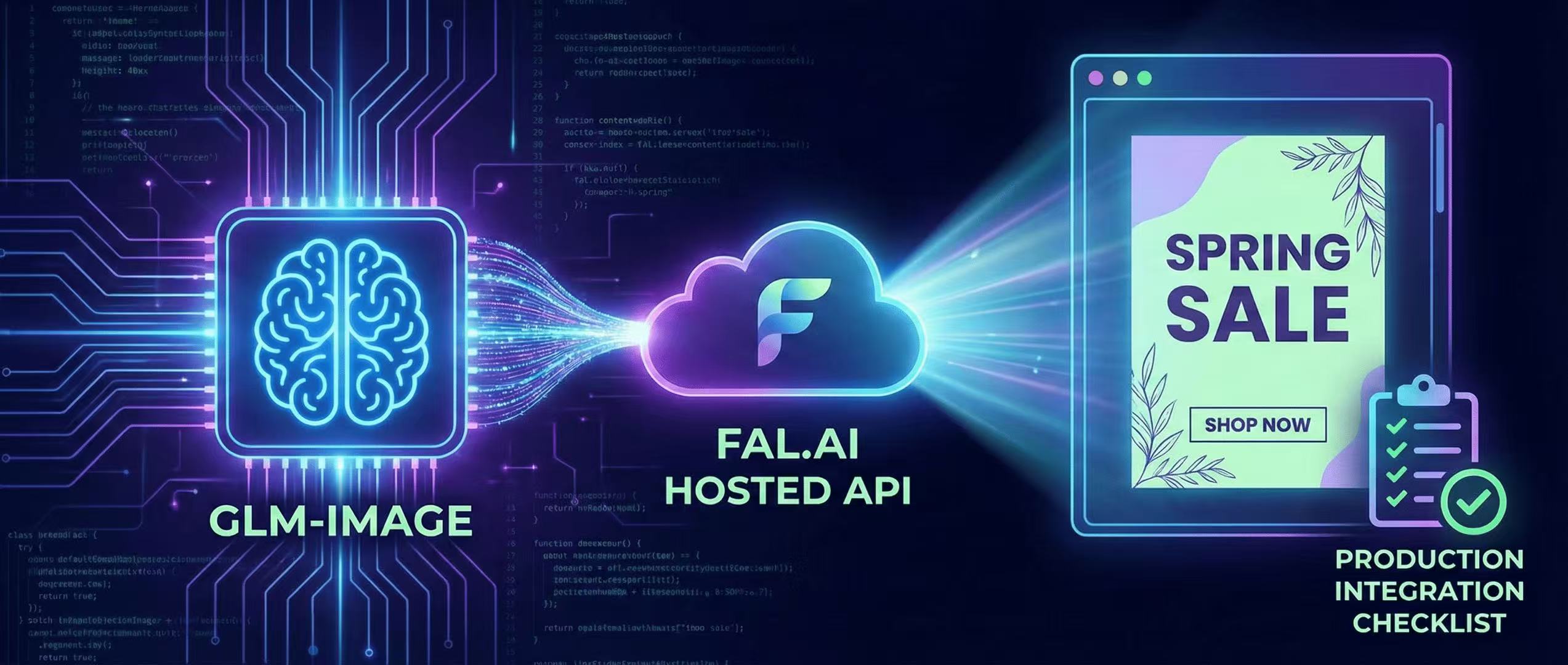

Deploy GLM-Image without managing GPUs—fal.ai API examples, latency considerations, and a production checklist.

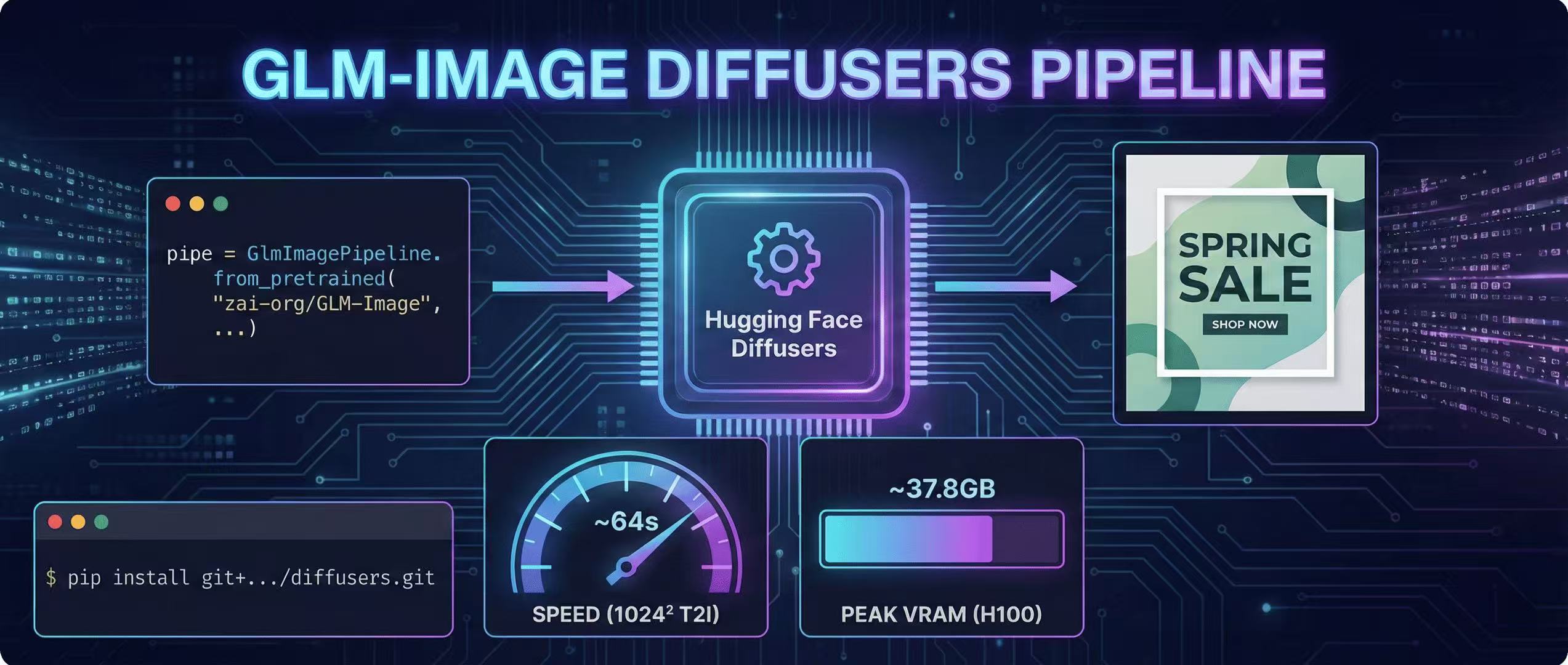

A step-by-step GLM-Image guide using Hugging Face Diffusers, including install, code, and real VRAM/time estimates.

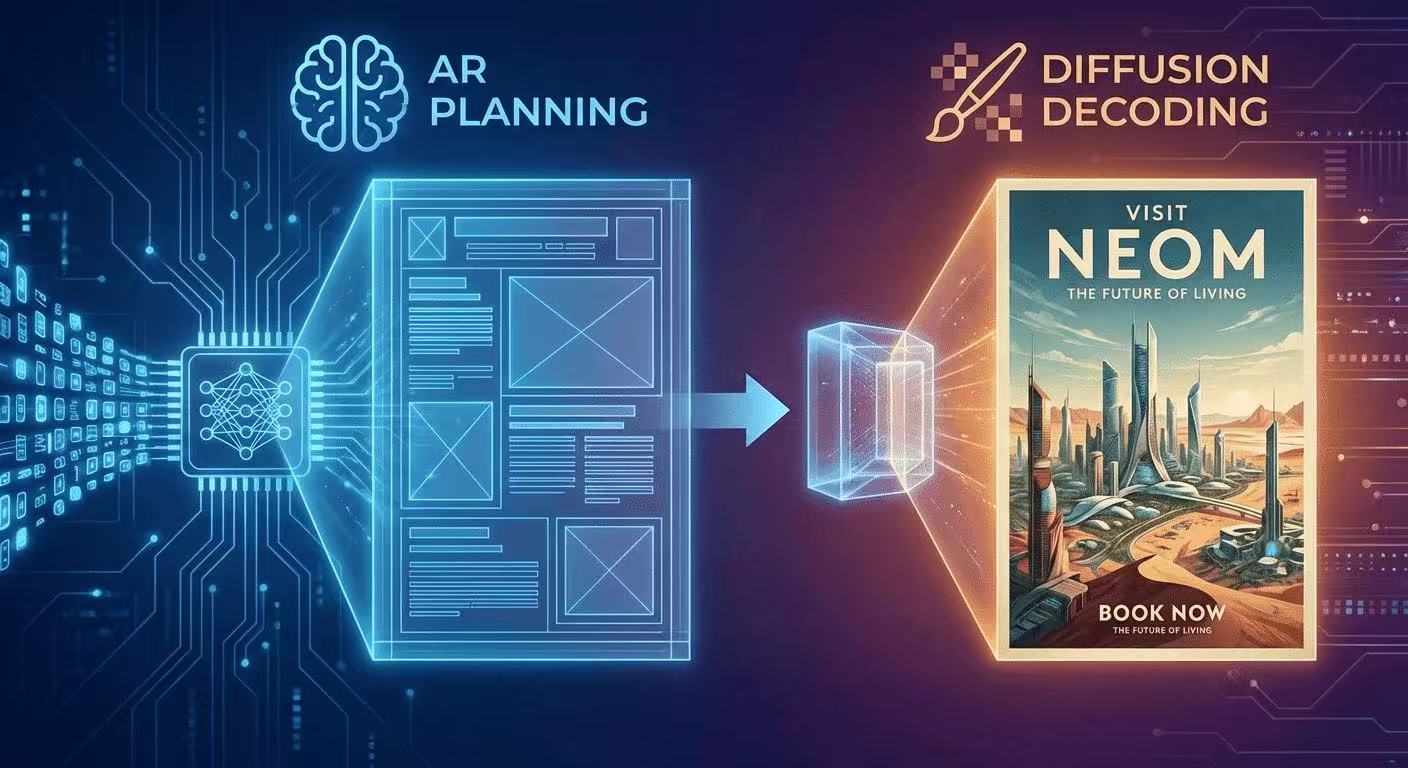

GLM-Image uses autoregressive planning for layout + diffusion decoding for pixel fidelity. Here's the intuition, diagrams, and what it means for text rendering.