2026/01/24

How to Run GLM-Image Locally with Diffusers: A Step-by-Step Guide

Everything you need to set up GLM-Image on your own hardware using the Diffusers library, including VRAM requirements and optimization tips.

Running GLM-Image locally keeps your prompts and images on your own machine and removes the generation limits of hosted services. Here is how to set it up.

VRAM Requirements

- Recommended: 24GB (RTX 3090/4090)

- Minimum: 16GB (with quantization)
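To see which tier a machine falls into, a small check like the one below can help. It is a sketch rather than anything shipped with GLM-Image or Diffusers: `vram_tier` and `detect_vram_gib` are hypothetical helper names, the thresholds are the 24GB and 16GB figures listed above, and the detection step assumes PyTorch with CUDA support.

```python
from typing import Optional

RECOMMENDED_GIB = 24  # "Recommended" figure from this guide
MINIMUM_GIB = 16      # "Minimum" figure from this guide (with quantization)


def vram_tier(total_gib: float) -> str:
    """Classify a GPU's memory against the thresholds in this guide."""
    # Drivers report slightly less than the nominal capacity (a "24GB" card
    # shows a bit under 24 GiB), so compare the nearest whole GiB.
    nominal = round(total_gib)
    if nominal >= RECOMMENDED_GIB:
        return "recommended"
    if nominal >= MINIMUM_GIB:
        return "minimum (use quantization)"
    return "below minimum"


def detect_vram_gib(device_index: int = 0) -> Optional[float]:
    """Return a CUDA device's total memory in GiB, or None if unavailable."""
    try:
        import torch  # imported lazily so the classifier works without it
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    props = torch.cuda.get_device_properties(device_index)
    return props.total_memory / 1024**3


if __name__ == "__main__":
    total = detect_vram_gib()
    if total is None:
        print("No CUDA GPU detected (or PyTorch is not installed).")
    else:
        print(f"{total:.1f} GiB total: {vram_tier(total)}")
```

The classification is kept separate from the detection so the thresholds can be adjusted, or checked on a machine without a GPU.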

Setup Steps

- Install `diffusers`, `transformers`, and `accelerate`.

- Download the weights from Hugging Face or Z.ai.

- Use `torch.float16` to save memory.
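The memory saving from `torch.float16` comes down to bytes per parameter: 2 instead of the 4 used by `float32`, so the weights take roughly half the space. The arithmetic can be sketched as follows. `weight_memory_gib` is a hypothetical helper, and the one-billion parameter count is an illustrative figure, not GLM-Image's actual size. The estimate covers weights only, not activations or other runtime overhead.

```python
# Storage size of one parameter in each precision.
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Estimate the memory needed to hold model weights, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


if __name__ == "__main__":
    params = 1_000_000_000  # illustrative count, not GLM-Image's real size
    for dtype in ("float32", "float16"):
        gib = weight_memory_gib(params, dtype)
        print(f"{dtype}: {gib:.2f} GiB per billion parameters")
```

Per billion parameters this works out to about 3.7 GiB in `float32` versus about 1.9 GiB in `float16`.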

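```python
import torch
from diffusers import GLMImagePipeline

# Class name and repo id are as given in this post; check them against your Diffusers release and the model card.
pipe = GLMImagePipeline.from_pretrained("z.ai/glm-image-v1", torch_dtype=torch.float16)
```

Performance Tips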

If you are tight on VRAM, enable CPU offloading. It lowers GPU memory use at the cost of slower generation.
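As a sketch of that tip: Diffusers pipelines expose `enable_model_cpu_offload()`, which relies on `accelerate` and moves each sub-model to the GPU only while it runs. The `place_pipeline` helper below is hypothetical, and its 24 GiB default is simply the recommended figure from this guide. It assumes `pipe` is an already-loaded pipeline and that the GLM-Image pipeline supports this standard offloading method.

```python
def place_pipeline(pipe, total_vram_gib: float, comfortable_gib: float = 24.0) -> str:
    """Put the pipeline on the GPU, or enable CPU offloading if VRAM is tight.

    Returns "cuda" or "cpu_offload" to show which path was taken.
    """
    # Reported VRAM sits slightly below nominal, so compare the nearest GiB.
    if round(total_vram_gib) >= comfortable_gib:
        pipe.to("cuda")
        return "cuda"
    # Do not call pipe.to("cuda") first: offloading manages device placement.
    pipe.enable_model_cpu_offload()
    return "cpu_offload"


# Usage, assuming `pipe` was loaded with from_pretrained as shown earlier:
#   mode = place_pipeline(pipe, total_vram_gib=16.0)
```

If memory is still insufficient, Diffusers also provides `enable_sequential_cpu_offload()`, which saves more VRAM but is considerably slower.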

More Posts

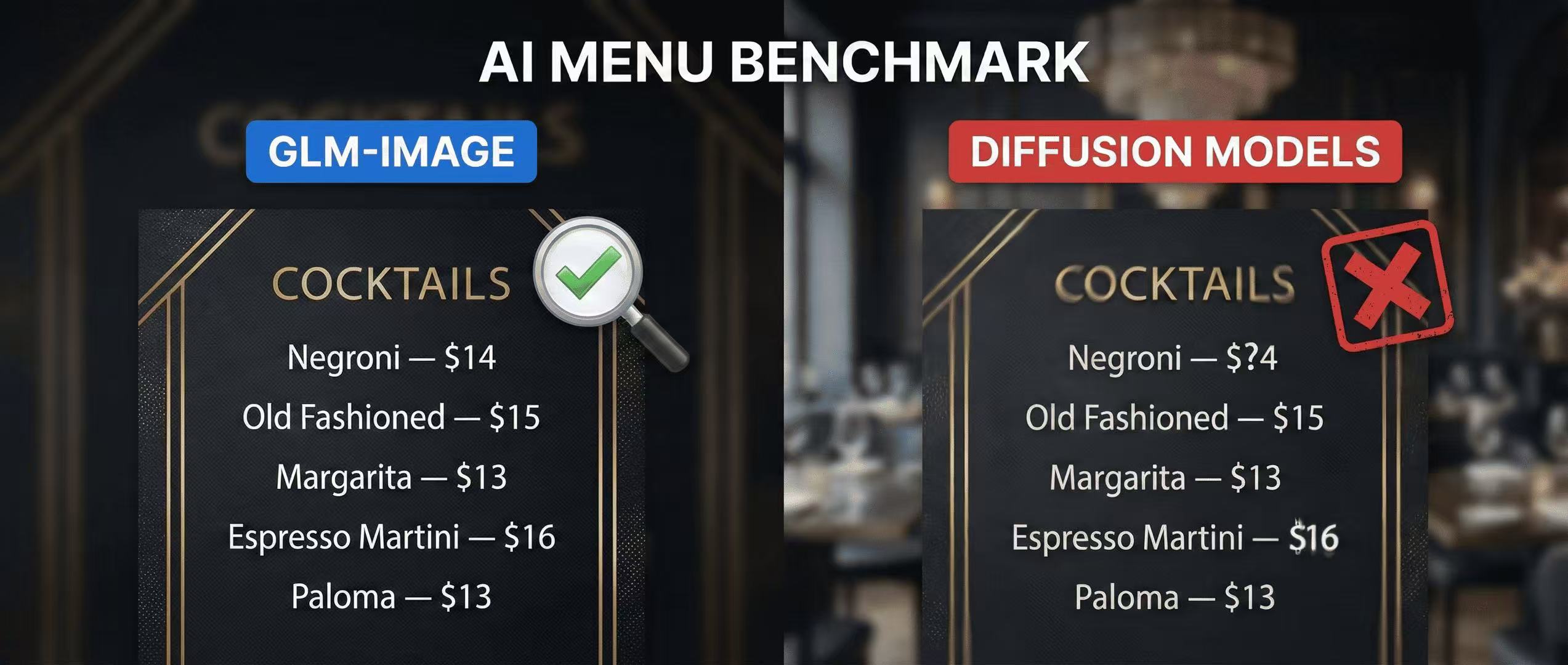

Menu Test: Why GLM-Image Beats Diffusion Models at Legible Pricing

A practical menu benchmark you can run at home—testing price readability, alignment, and typography using GLM-Image with a clear scoring rubric.

GLM-Image for Interior Design: Visualizing Spaces with Text

Why interior designers are using GLM-Image to include specific material labels and dimensional callouts in their renders.

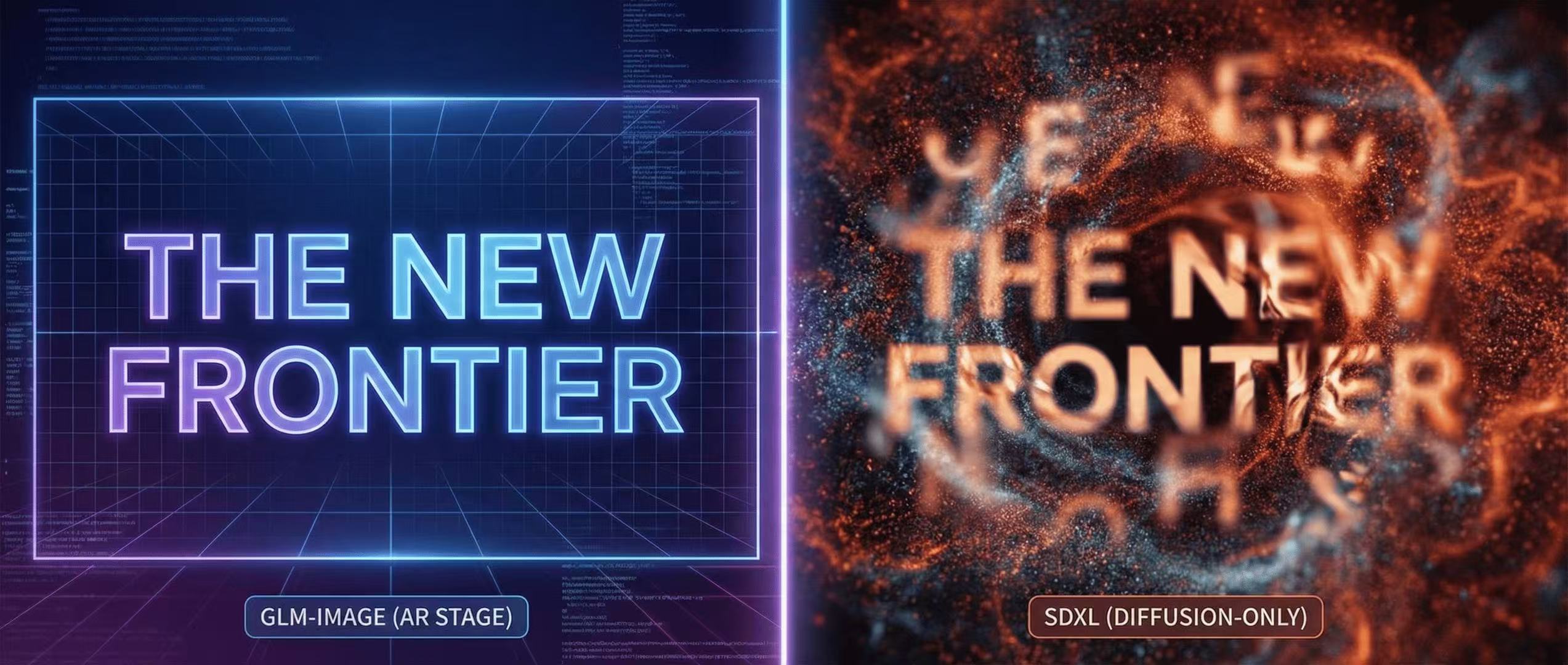

GLM-Image vs SDXL: Why Text Rendering is the New Frontier

A side-by-side comparison of text fidelity in complex layout generation. See why GLM-Image's AR stage outperforms traditional diffusion-only models.